Cisco Unified AI Assistant

Designing the AI layer that connects Cisco's entire product portfolio

I led the design of the Unified Cisco AI Assistant, the cross-product intelligence layer that became the foundation for AI Canvas, Cisco's first generative UI for IT operations, announced at Cisco Live 2025.

AI Product Designer

Scope

Impacts 10+ Cisco products across Security, Networking, and Observability

Cross-org collaboration with 20+ product teams

Shipped

Announced at Cisco Live San Diego in June 2025, with early access in August 2025

About

Enterprise IT teams weren't struggling with a lack of tools; they were struggling with too many.

Resolving a single cross-domain issue meant navigating 15 or more product dashboards: Meraki for network topology, ThousandEyes for path performance, XDR for security events, Duo for authentication, Firewall for policy, Splunk for application data. Each tool worked in isolation. Teams were spending 70% of troubleshooting time just gathering and correlating data rather than fixing anything.

Nearly 64% of organizations were projected to face IT skills shortages by 2026, while the volume and complexity of work kept growing. The problem wasn't a lack of AI tools. It was a lack of AI that could reason holistically across domains, treating a security event and a network anomaly as parts of the same story rather than incidents in separate systems.

Every Cisco product already had some form of AI assistance. The problem was that those assistants were isolated, each expert in its own domain, none able to pass context across a boundary. A security incident with a network root cause required a manual hand-off between two teams, with all the context loss that implied. Agents couldn't share context or coordinate. There was no connective tissue between them.

My framing wasn't "how do we build a better AI assistant?" It was "how do we design the connective tissue, the orchestration layer, between the assistants that already exist?"

That question led to two connected design challenges: the interaction model for the Unified AI Assistant itself, and the workspace model for how teams collaborate around it, which became AI Canvas.

Strategy

Process

We shadowed real-time incident response sessions with NetOps engineers, SecOps analysts, and Tier 1 to Tier 3 support teams at Fortune 50 companies. The finding was consistent: the bottleneck was never analysis. It was orchestration. Resolving a cross-domain issue took 3 to 5 teams on average, with no shared context and no cross-functional alignment on what "resolved" even meant until someone in Tier 3 finally had the full picture.

Foundational research for understanding how IT teams actually work

I designed around four principles. No blank canvases: the AI proactively loads context, so there is never an empty state. Human-in-the-loop: all actions require approval, with visible reasoning. Shared intelligence: persistent workspaces that multiple teams can access. Progressive disclosure: high-level insights first, detail on demand.

Defining the interaction model

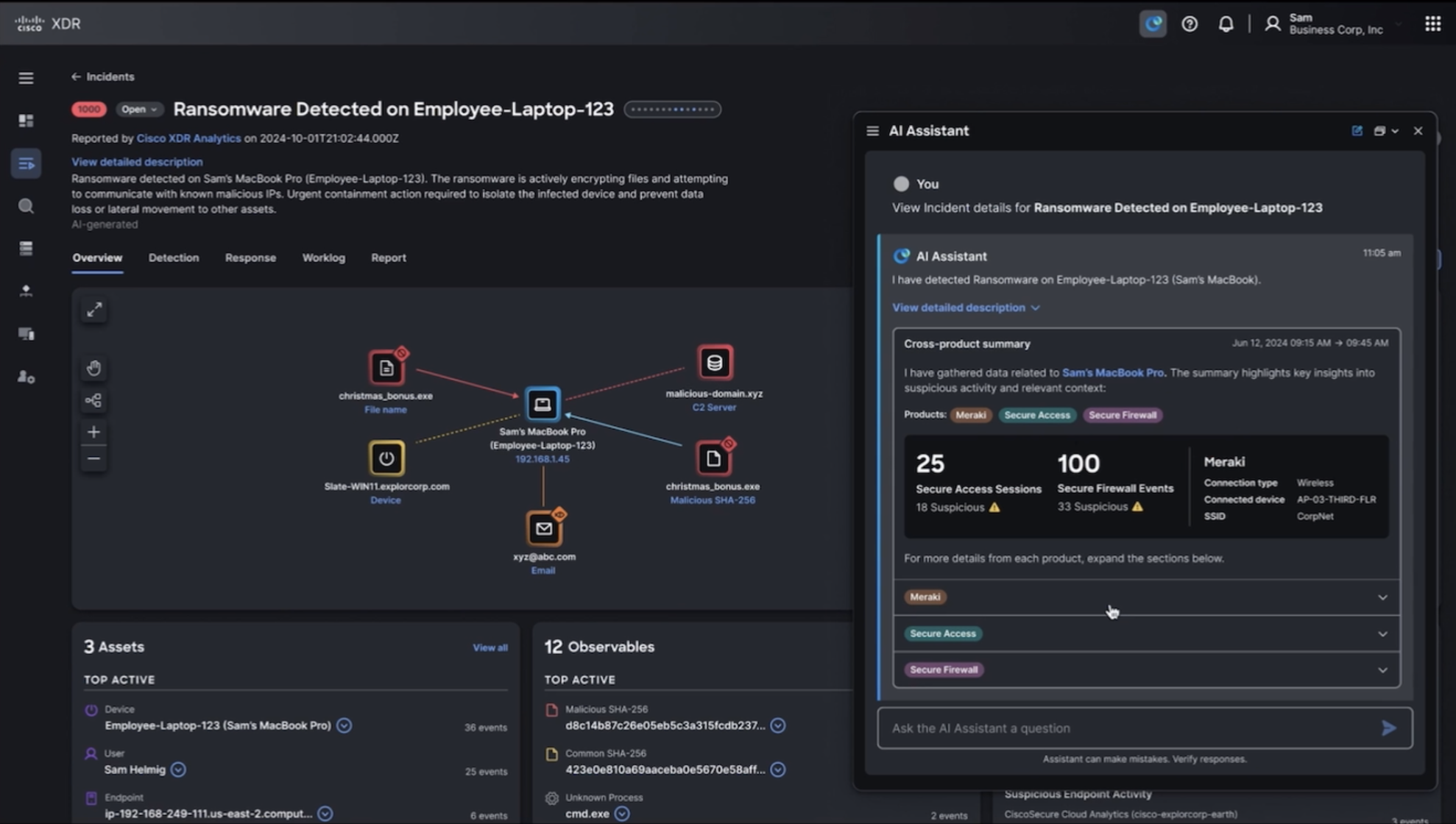

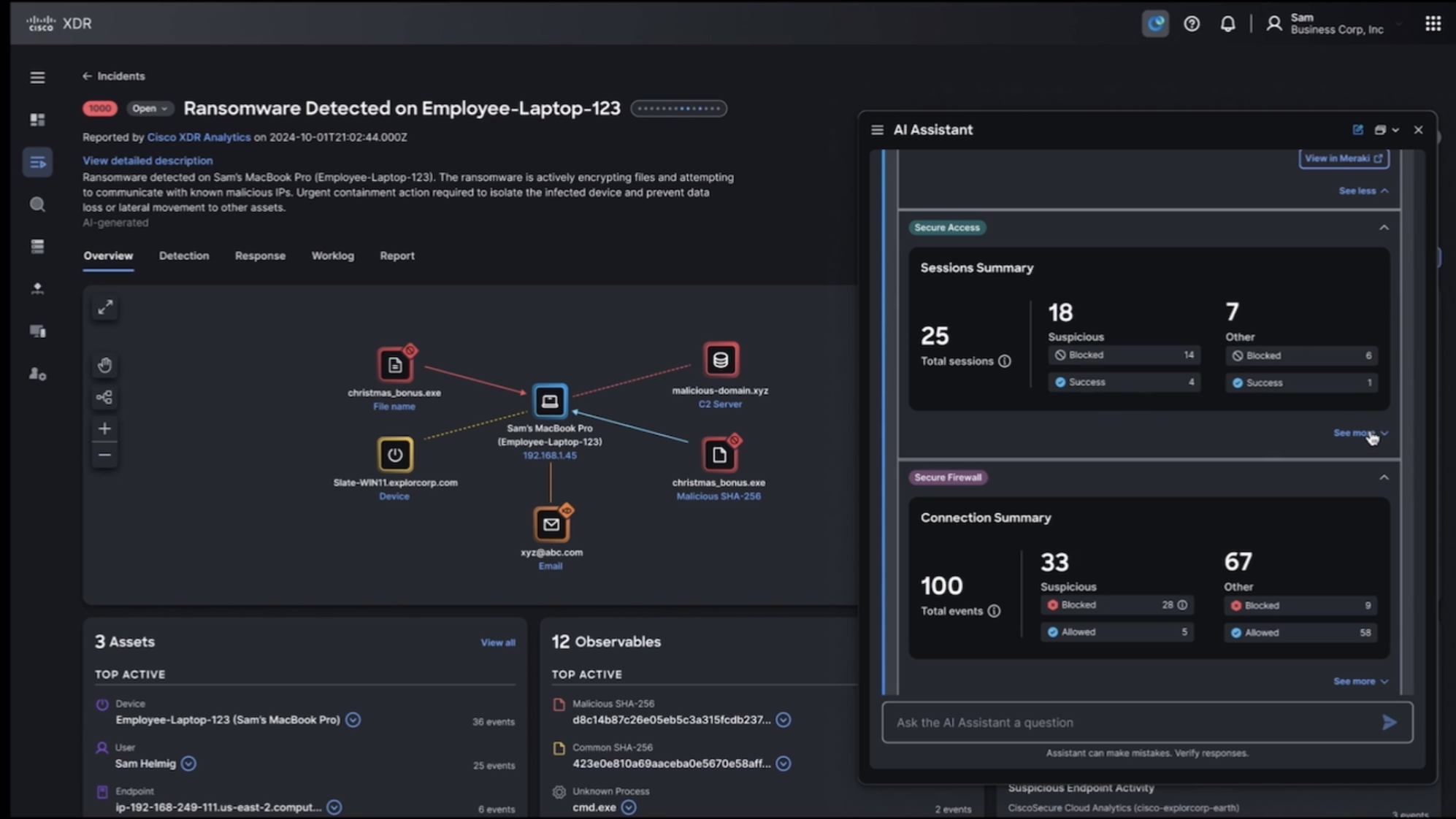

One of the most significant design challenges was making multi-product data correlation legible without being overwhelming. When a user asks "why is the VPN slow?", the answer draws from five or more products simultaneously. I designed a card system where each insight, metric, or action surfaces as a distinct card with clear data source attribution. The user always knows which product contributed which piece of context, and why it's relevant to the current investigation.
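As a rough illustration of the card idea, the sketch below models a card that always carries its data source and a short relevance note. The `InsightCard` type, its field names, and the sample values are hypothetical and invented for this example; they are not the shipped data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class InsightCard:
    """Hypothetical card shape: every card names the product it came from."""
    kind: str       # "insight", "metric", or "action"
    source: str     # contributing product, e.g. "Meraki"
    summary: str    # high-level text shown first
    relevance: str  # why it matters to the current investigation


def group_by_source(cards):
    """Return {product: [summaries]} so attribution stays visible per product."""
    grouped = defaultdict(list)
    for card in cards:
        grouped[card.source].append(card.summary)
    return dict(grouped)


# Illustrative values only, not real telemetry.
cards = [
    InsightCard("metric", "ThousandEyes", "Path latency elevated",
                "VPN traffic crosses this path"),
    InsightCard("insight", "Meraki", "Client roaming between access points",
                "The affected user is on this client"),
    InsightCard("metric", "ThousandEyes", "Packet loss on one hop",
                "Same path as the latency spike"),
]
by_source = group_by_source(cards)
```

Making `source` and `relevance` required fields is one way to guarantee that a card cannot be rendered without attribution.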

I also designed the orchestration patterns that show which AI skills are active during a response. The "thinking" states ("Checking Meraki for client details... Analyzing Firewall logs... Correlating with ThousandEyes data...") make the AI's reasoning visible rather than opaque. This was a deliberate trust design decision: in high-stakes operational contexts, users need to see the work, not just the answer.

Designing the cross-product skills architecture

I prototyped two end-to-end flows.

The threat response flow auto-starts when XDR flags an incident and opens a Unified AI Assistant session that pulls data from Meraki, Firewall, Secure Access, Duo, and Identity Intelligence to surface cross-product correlations. AI highlights observables (malicious IPs, domains, behavioral anomalies) with progressive disclosure from summary to detailed evidence.

The network troubleshooting flow diagnoses app performance issues by correlating ThousandEyes path metrics, Meraki client connectivity, Catalyst Center topology, Splunk logs, and ISE authentication into a unified root-cause analysis. Natural-language filters (e.g., "correlate traffic from [client] to [server IP]") let engineers refine cross-product queries without tool-specific syntax.

Threat response and network troubleshooting flows

Cisco Unified AI Assistant threat response prototype.

I built fully working coded prototypes using Cursor, Claude, Cisco's internal AI Assistant API, Meraki OpenAPI, and XDR. Engineers and stakeholders could interact with real data. Feasibility conversations that normally happen late in development happened early, when they were cheap to answer.

Building functional prototypes, not mockups

Cisco Unified AI Assistant and AI Canvas fully functional prototype.

Improvements

1

The first version of the workspace assumed a predictable layout, a known set of card types in a logical order. Testing showed that the most valuable moments were the unexpected correlations: a Meraki network anomaly that turned out to be the root cause of an XDR security alert no one had connected. The layout system needed to prioritize AI-identified connections over simple chronological or product-category ordering.
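One way to picture the revised ordering rule is a sort that puts cards with AI-identified connections ahead of everything else and falls back to arrival order. This is a minimal sketch under assumed names (`WorkspaceCard`, `linked_to`, `order_cards`), not the layout system that shipped.

```python
from dataclasses import dataclass


@dataclass
class WorkspaceCard:
    """Hypothetical workspace card; linked_to holds ids the AI connected it to."""
    card_id: str
    source: str
    timestamp: int          # arrival order in the workspace
    linked_to: tuple = ()   # ids of related cards identified by the AI


def order_cards(cards):
    """Cards with the most AI-identified links come first, then by arrival time."""
    return sorted(cards, key=lambda c: (-len(c.linked_to), c.timestamp))


# Illustrative scenario: a late-arriving Meraki anomaly linked to an XDR alert.
workspace = [
    WorkspaceCard("xdr-alert", "XDR", 1, ("meraki-anomaly",)),
    WorkspaceCard("duo-login", "Duo", 2),
    WorkspaceCard("meraki-anomaly", "Meraki", 3, ("xdr-alert",)),
]
ordered_ids = [c.card_id for c in order_cards(workspace)]
```

Under a purely chronological layout the Meraki card would sit last; with this key it moves up next to the XDR alert it explains.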

2

The second pivot was about autonomy. Early prototypes offered aggressive automation: the AI would propose a fix and suggest executing it immediately. Engineers consistently pushed back, not because they didn't trust the diagnosis, but because they needed to understand the change before authorizing it. The pattern that emerged: propose, explain, authorize, execute, log. That sequence became the foundation for all AI-driven actions in the experience.
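The sequence can be sketched as a small state machine that refuses to skip steps and requires a named human at the authorize step. The `Step` and `ActionFlow` names and the sample action are assumptions made for illustration, not the production implementation.

```python
from enum import Enum


class Step(Enum):
    PROPOSE = 1
    EXPLAIN = 2
    AUTHORIZE = 3
    EXECUTE = 4
    LOG = 5


class ActionFlow:
    """Hypothetical flow that enforces propose, explain, authorize, execute, log."""

    def __init__(self, description):
        self.description = description
        self.completed = []

    def advance(self, step, approved_by=None):
        if len(self.completed) == len(Step):
            raise ValueError("flow is already complete")
        expected = Step(len(self.completed) + 1)
        if step is not expected:
            raise ValueError(f"expected {expected.name}, got {step.name}")
        if step is Step.AUTHORIZE and not approved_by:
            raise PermissionError("a human must authorize before execution")
        self.completed.append(step)


flow = ActionFlow("Update a firewall rule")  # illustrative action
flow.advance(Step.PROPOSE)
flow.advance(Step.EXPLAIN)

# Jumping straight to EXECUTE is rejected because AUTHORIZE has not happened.
try:
    flow.advance(Step.EXECUTE)
    skip_blocked = False
except ValueError:
    skip_blocked = True

flow.advance(Step.AUTHORIZE, approved_by="tier1-engineer")
flow.advance(Step.EXECUTE)
flow.advance(Step.LOG)
```

Keeping the explanation as its own step before authorization reflects what engineers asked for: understanding the change first, approving it second.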

Outcomes

AI Canvas was announced at Cisco Live San Diego in June 2025 as the centerpiece of Cisco's AgenticOps strategy, entering early access in August 2025. It is Cisco's first generative UI for cross-domain IT operations.

In usability testing with 9+ IT engineers: 95% completed cross-product troubleshooting without training. Average time to understand incident context dropped from 23 minutes to under 3 minutes. Every participant preferred the collaborative model over traditional ticket escalation.

At the system level: an approximately 40% reduction in mean time to insight in cross-product investigations, a 60% improvement in trust and explainability ratings, and an 89% reduction in tool-switching during troubleshooting.

Reflections

The hardest part of this project wasn't the design; it was the alignment. When you're designing an orchestration layer across 10+ products owned by 20+ teams, every design decision is also a political decision: which product's data surfaces first, whose agent gets invoked by default, whose workflow gets simplified and whose gets disrupted. I learned that the design artifact alone can't resolve that kind of tension. What worked was building functional prototypes that made the tradeoffs tangible, so stakeholders could interact with multiple versions and see the difference. The prototype became the negotiation tool.

The other lesson was about trust in usability testing. With 9 participants, we saw a clear signal: 95% task completion, and time to understand context down from 23 minutes to under 3 minutes. But the most useful insight came less from the metrics than from watching a Tier 1 engineer hesitate before authorizing an AI-recommended action, then scroll up to re-read the reasoning. That moment shaped the "propose, explain, authorize, execute, log" pattern more than any of the quantitative data did. Qualitative observation at the right moment matters more than sample size.